See the latest portfolio at https://dgeisert-portfolio.lovable.app/

Prototyper working in AI and XR, formerly at Meta, building AI products with AI tools.

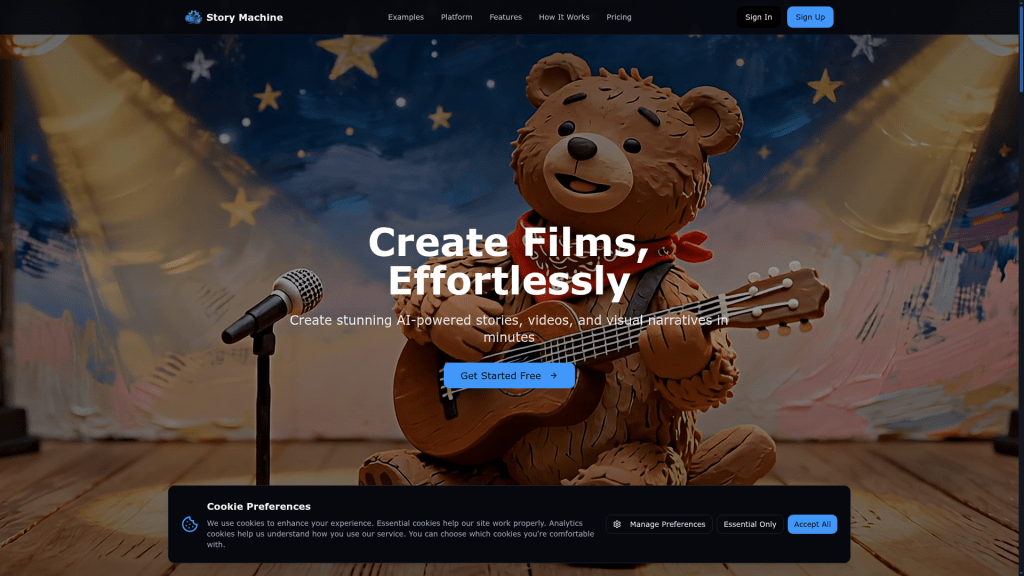

Story Machine AI

Story Machine AI is a full-stack AI filmmaking platform I conceived, architected, and built as a solo founder. The goal was to take a user from a simple idea to a fully assembled short film, including characters, scenes, video clips, voice, and lipsync, without the need for a traditional studio. The core hypothesis: the generative ecosystem (reference-based image models, affordable video generation, audio synthesis, lipsync, LLMs) had matured enough to support a structured filmmaking pipeline rather than isolated single-shot generation. I built the MVP in ~1 month, hired two developers and a marketer, and shipped a live product. Ultimately, while the system worked well technically, market timing and my lack of a strong marketing co-founder limited growth.

This project represents my strengths as:

- AI systems architect

- Rapid prototyper

- Full-stack product engineer

- Founder who can ship complex AI systems to production

The Problem

Most generative media tools fall into one of two categories:

- Low-level tools: powerful but manual (generate one image or video at a time)

- Fully automated tools: easy but rigid (little control over structure or assets)

There was no system that:

- Managed characters, environments, and objects as reusable assets

- Preserved consistency across shots

- Structured a story before generating media

- Allowed iteration without losing control

Independent filmmakers had the models — but no workflow.

The Product

Story Machine AI structured the filmmaking process into a multi-stage AI pipeline:

1. Concept → Story Structure

- LLM-driven story development

- Scene breakdown

- Shot planning

- Prompt generation for visual consistency

2. Asset Creation

- Reference-based character generation

- Setting and object management

- Version history for iterative edits

- Multi-model support for different aesthetic outputs

3. Shot Generation

- Start-frame prompt engineering

- Video generation prompts derived from story structure

- Model selection control

- Iterative refinement tools

4. Assembly

- Video stitching

- Audio layering

- Voice alteration

- Lipsync integration

- Advanced editing controls

The system allowed both:

- Seamless “guided” creation

- Deep manual control for power users

Architecture & Technical Design

Stack

- Frontend: React + Vite

- Backend: Supabase

- Deployment: Vercel

- AI Layer: API-based LLM and media model integrations

- Built with: Cursor for accelerated backend/API integration

Originally, I began building in Unity. After prototyping, it became clear that a web-first architecture was necessary for iteration speed and accessibility. I threw away the Unity implementation and rebuilt the system in React — a critical early architectural pivot.

AI Orchestration Layer

The core technical innovation was a structured LLM-driven workflow. This was not a simple “chat wrapper.” It was a multi-step prompt-engineered pipeline with state awareness:

- Story memory

- Asset references

- Shot-level continuity

- Context-aware prompt construction

- Model-specific formatting logic

Every generation step was mediated through carefully constructed prompts designed to:

- Preserve character consistency

- Maintain scene coherence

- Reduce drift across video segments

- Balance creative flexibility with structural control

I designed the orchestration system architecture and used Cursor to implement and scale API integrations efficiently.
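As an illustration of what context-aware prompt construction with model-specific formatting can look like, here is a minimal sketch. It is not the production code: the class names, the two model labels, and the formatting rules are all hypothetical, chosen only to show story memory, asset references, and shot-level continuity feeding one prompt.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Asset:
    """A reusable character, setting, or object (hypothetical shape)."""
    name: str
    description: str


@dataclass
class Shot:
    """One planned shot: what happens and which assets appear in it."""
    action: str
    asset_names: list[str]


@dataclass
class StoryState:
    """State carried from one generation step to the next."""
    summary: str  # compact story memory
    assets: dict[str, Asset] = field(default_factory=dict)
    previous_shot: Optional[Shot] = None  # shot-level continuity


# Hypothetical model names and formatting rules: one model is assumed to
# prefer comma-separated fragments, the other full sentences.
MODEL_FORMATTERS: dict[str, Callable[[list[str]], str]] = {
    "tag-style-model": lambda parts: ", ".join(parts),
    "prose-style-model": lambda parts: ". ".join(parts) + ".",
}


def build_shot_prompt(state: StoryState, shot: Shot, model: str) -> str:
    """Assemble a prompt from story memory, asset references, and continuity."""
    parts = [f"Story so far: {state.summary}"]
    for name in shot.asset_names:
        asset = state.assets[name]
        # Reuse the stored description verbatim so the asset does not drift.
        parts.append(f"{asset.name}: {asset.description}")
    if state.previous_shot is not None:
        parts.append(f"Continues from: {state.previous_shot.action}")
    parts.append(f"Shot: {shot.action}")
    return MODEL_FORMATTERS[model](parts)
```

The point of the sketch is that the prompt is derived from stored state rather than typed by hand, so every shot that features a character repeats the same description.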

Asset Management System

One of the strongest aspects of the platform was the asset system:

- Character reference tracking

- Version history

- Setting and object state preservation

- Cross-scene reuse

- Shot-to-shot continuity management

Unlike most generative tools that treat each image or video as independent, Story Machine AI treated assets as first-class objects within a structured narrative graph. This reduced overhead while increasing creative control.
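To make "assets as first-class objects" concrete, here is a rough sketch of a versioned asset plus a graph that links shots to the assets they use. The class and field names are assumptions for illustration, not the actual schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class AssetVersion:
    """One immutable snapshot of an asset (field names are illustrative)."""
    prompt: str
    image_ref: str


@dataclass
class VersionedAsset:
    name: str
    versions: list[AssetVersion] = field(default_factory=list)

    def add_version(self, prompt: str, image_ref: str) -> int:
        """Append a new snapshot and return its index in the history."""
        self.versions.append(AssetVersion(prompt, image_ref))
        return len(self.versions) - 1

    def current(self) -> AssetVersion:
        return self.versions[-1]

    def revert_to(self, index: int) -> AssetVersion:
        """Re-append an old snapshot so reverting never destroys history."""
        self.versions.append(self.versions[index])
        return self.current()


@dataclass
class NarrativeGraph:
    """Shots point at assets by name, so one asset can serve many shots."""
    assets: dict[str, VersionedAsset] = field(default_factory=dict)
    shot_assets: dict[str, set[str]] = field(default_factory=dict)

    def add_shot(self, shot_id: str, asset_names: set[str]) -> None:
        self.shot_assets[shot_id] = set(asset_names)

    def shots_using(self, asset_name: str) -> list[str]:
        """Shots that would need regenerating if this asset changed."""
        return sorted(
            shot_id
            for shot_id, names in self.shot_assets.items()
            if asset_name in names
        )
```

With a structure like this, editing a character becomes a single change to one asset, and the graph can report which shots depend on it.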

Real-Time Generative Video Stream

One of the technical achievements I’m most proud of is a live video stream we built, where:

- Viewers could comment in real time

- The system would update the generated video within ~6 seconds

- Video was generated using Memflow and LTX2-based models

This required:

- Fast prompt regeneration

- Context compression

- Model response orchestration

- Near-real-time media updating

It demonstrated that generative storytelling could be reactive, not static.
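A heavily simplified sketch of the prompt-regeneration side of such a loop is below. It uses a bounded rolling window of comments with a character budget as a crude stand-in for context compression; the real system's method is not described here, and the class name, parameters, and budget values are assumptions.

```python
from collections import deque


class LivePromptState:
    """Keeps a small window of viewer comments and rebuilds the prompt on demand."""

    def __init__(self, base_scene: str, max_comments: int = 3, max_chars: int = 200):
        self.base_scene = base_scene
        # deque(maxlen=...) silently drops the oldest comment when full.
        self.recent = deque(maxlen=max_comments)
        self.max_chars = max_chars

    def add_comment(self, text: str) -> None:
        self.recent.append(text.strip())

    def build_prompt(self) -> str:
        prompt = self.base_scene
        # Walk newest-first so the latest comments survive the size budget.
        for comment in reversed(self.recent):
            candidate = f"{prompt}; {comment}"
            if len(candidate) > self.max_chars:
                break
            prompt = candidate
        return prompt
```

Keeping the prompt short and cheap to rebuild matters here because every viewer comment triggers a new generation request inside the latency budget.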

My Role

As CEO and primary developer, I:

- Conceived the product vision

- Designed the UX

- Architected the full system

- Designed the AI orchestration layer

- Implemented much of the backend using Cursor

- Led product iteration

- Hired and managed two developers and a marketer

- Directed product strategy

I hired a contractor to deploy a staging environment and assist with DevOps. I built the MVP in approximately one month.

Prototyping & Iteration

Speed is one of my core strengths.

- MVP built in ~1 month

- Multiple UX iterations

- Rewrote architecture after abandoning Unity

- Rapidly integrated evolving AI APIs

- Built, tested, discarded, rebuilt — repeatedly

One example: The early video editor was tightly integrated with asset management. It became clear that the complexity of video editing was constraining the broader asset system. I separated concerns and redesigned the architecture to protect core system flexibility. I prioritize identifying the core problem rather than getting attached to proposed solutions — either my own or users’.

Results

- Product shipped to production

- Live users

- Generated complete short films

- Small revenue

- Partnership with ByteDance on a short movie

- No external funding

While the platform worked well technically, growth was limited. Two main factors:

- The market for structured AI filmmaking workflows was still early.

- As a solo technical founder, I struggled to find a strong marketing/sales co-founder.

I chose not to aggressively pursue fundraising without strong go-to-market support.

What This Project Demonstrates

AI Systems Thinking

I don’t just call models; I design structured workflows around them.

Rapid Prototyping

Idea → MVP → production in ~1 month.

Product Intuition

I identify workflow friction and redesign architecture accordingly.

Technical Adaptability

- Rewrote architecture when Unity proved limiting

- Integrated evolving AI APIs quickly

- Balanced automation with user control

Founder-Level Ownership

I’ve shipped a full-stack AI product end-to-end.

Why This Matters for AI Engineering Roles

Story Machine AI demonstrates that I can:

- Architect multi-stage LLM systems

- Build full-stack AI products

- Design structured generation pipelines

- Manage state and continuity across AI outputs

- Iterate quickly in fast-moving model ecosystems

- Lead small teams while staying hands-on

Even though the market timing wasn’t ideal and I lacked a strong marketing partner, the technical system worked — and shipping it gave me deep experience building real AI infrastructure under real constraints.

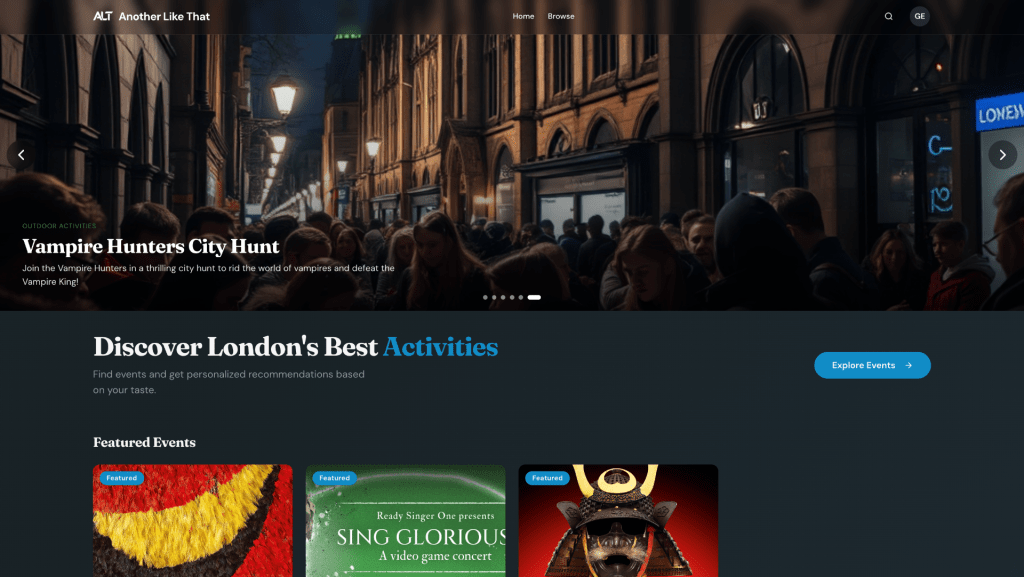

Another Like That

Another Like That is a live recommendation engine designed to help people in London discover events that are similar to what they already love — especially the lesser-known ones. It began with a simple frustration: London has thousands of events happening every week, but discovery is dominated by a handful of promotional platforms pushing the same visible experiences to everyone. I wanted to build something different. Not a promotional feed. Not a static directory. But a living system that understands similarity.

The Problem

Consider existing platforms like:

- Time Out

- Secret London

- Tripadvisor

They are not built for true personalization. They are built for distribution. Meanwhile:

- Smaller venues are hard to find

- Recurring but changing events (comedy nights, talks, meetups) are messy to track

- There isn’t even a clean, structured list of all museums in London

The discovery layer is broken.

My Role

I am the founder and CEO. I originated the idea, defined the UX, architected the system, and designed the brand. I operate as:

- Systems architect

- Product strategist

- Primary UX designer

- High-fidelity prototyper

I hired an engineer for the heavy implementation lift, but I defined the architecture and core logic.

From Idea to Live in 3 Weeks

Speed matters. We went from concept → live product in three weeks. I design in high fidelity from day one. With modern tools like Cursor and code-first iteration, fidelity is no longer expensive. In fact, it surfaces real product problems earlier. Low-fidelity wireframes hide complexity. High-fidelity prototypes surface it. We iterate daily.

The Hard Problem: Modeling the Real World

The real challenge wasn’t building a website. It was modeling the chaos of real-world events. Events are not uniform objects:

- One-time theatre performances

- Weekly comedy nights with rotating performers

- Museums open daily with fixed hours

- Seasonal exhibitions

- Multi-day festivals

Initially, we considered modeling each instance of every recurring event. That was a mistake. It would have exploded the system with unnecessary complexity. Instead, I made a structural decision:

- Separate Venues and Events

- Venues are persistent entities

- Events are occurrences tied to venues

- Venues can surface independently in search results (e.g., museums)

- Recurring events are modeled structurally, not instance-by-instance

This abstraction reduced system complexity while improving user clarity. It was a product simplification that made the entire engine viable.
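One way to picture this venue/event split is the sketch below. It is an assumption-laden illustration, not the real schema: the field names are invented, and recurrence is reduced to a date range plus an optional weekday, which is enough to cover the cases listed above without storing one row per occurrence.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Venue:
    """A persistent place that can appear in results on its own."""
    name: str
    area: str


@dataclass(frozen=True)
class Event:
    """An occurrence pattern tied to a venue, stored once however often it repeats."""
    title: str
    venue: Venue
    start: date
    end: Optional[date] = None     # inclusive last day; None means open-ended
    weekday: Optional[int] = None  # 0 = Monday; None means every day in range

    def occurs_on(self, day: date) -> bool:
        if day < self.start:
            return False
        if self.end is not None and day > self.end:
            return False
        return self.weekday is None or day.weekday() == self.weekday


def events_on(events: list[Event], day: date) -> list[str]:
    """Titles of all events happening on a given day."""
    return [e.title for e in events if e.occurs_on(day)]
```

A one-off performance sets `start` and `end` to the same day, a weekly comedy night sets `weekday`, and a festival sets a date range. A museum needs no event at all because the venue itself is searchable.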

The System: Building for Similarity

Another Like That is not a directory. It is a similarity engine. Under the hood:

- Events and venues are scraped from structured sources

- Data is normalized and cleaned

- Text and metadata are embedded into vectors

- Similarity is computed using vector comparison

- User interactions refine preference modeling
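The embed-and-compare steps can be sketched as follows. This is a toy: `embed` is a bag-of-words counter standing in for whatever learned embedding model the real system uses, and the function names and sample listings are invented for illustration. The cosine similarity calculation itself is the standard one.

```python
from __future__ import annotations

import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse vectors (0.0 if either is empty)."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar(query: str, catalogue: dict[str, str], top_k: int = 3) -> list[str]:
    """Rank catalogue entries by similarity to the query, dropping zero matches."""
    q = embed(query)
    scored = [
        (cosine_similarity(q, embed(description)), name)
        for name, description in catalogue.items()
    ]
    scored.sort(reverse=True)
    return [name for score, name in scored[:top_k] if score > 0]
```

Because ranking comes from the vectors rather than hand-assigned tags, a newly scraped event becomes comparable to everything else as soon as it is embedded.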

Together, these components allow us to:

- Surface things “like this” instantly

- Avoid stale manual tagging systems

- Detect new events as they appear

- Continuously expand coverage

The system is designed to scale:

- Geographically (London → other cities)

- Vertically (events → restaurants → exhibitions → communities)

London is the wedge. The architecture is portable.

Design Philosophy

Two principles guided the UX:

1. Pages Should Feel Alive

No static directories. No dead lists. The system updates as events change. New items surface dynamically.

2. Don’t Overload the User

Discovery should feel serendipitous, not exhausting. Instead of:

- Dense filters

- Promotional banners

- Information overload

We lean on:

- Structured similarity

- Clear grouping

- Interest-based surfacing

The product works even before deep personalization — which surprised me. That was an important signal.

Rapid Prototyping & PMF

I care more about speed than theoretical completeness. We do not build complex systems without a clear user need. The best example: we avoided modeling event instances once we saw the cost-to-value mismatch.

Our current hypothesis: people want a better way to discover smaller, interest-aligned events. We are testing:

- Repeat visits

- Engagement depth

- Exploration behavior

It is early.

But even without monetization, the engine became useful quickly — faster than expected. That’s the signal I’m watching.

What This Project Says About Me

I am not just designing interfaces. I am designing systems. I think in:

- Data structures

- Similarity modeling

- Scalability

- Wedge strategies

I build fast to find truth. And I simplify aggressively when complexity does not serve users. Another Like That is an early-stage product. But it demonstrates:

- Architecture thinking

- UX clarity under complexity

- LLM-powered infrastructure

- Vector-based recommendation systems

- Rapid founder-led execution

It is a living experiment in finding product–market fit. And it’s just the starting point.

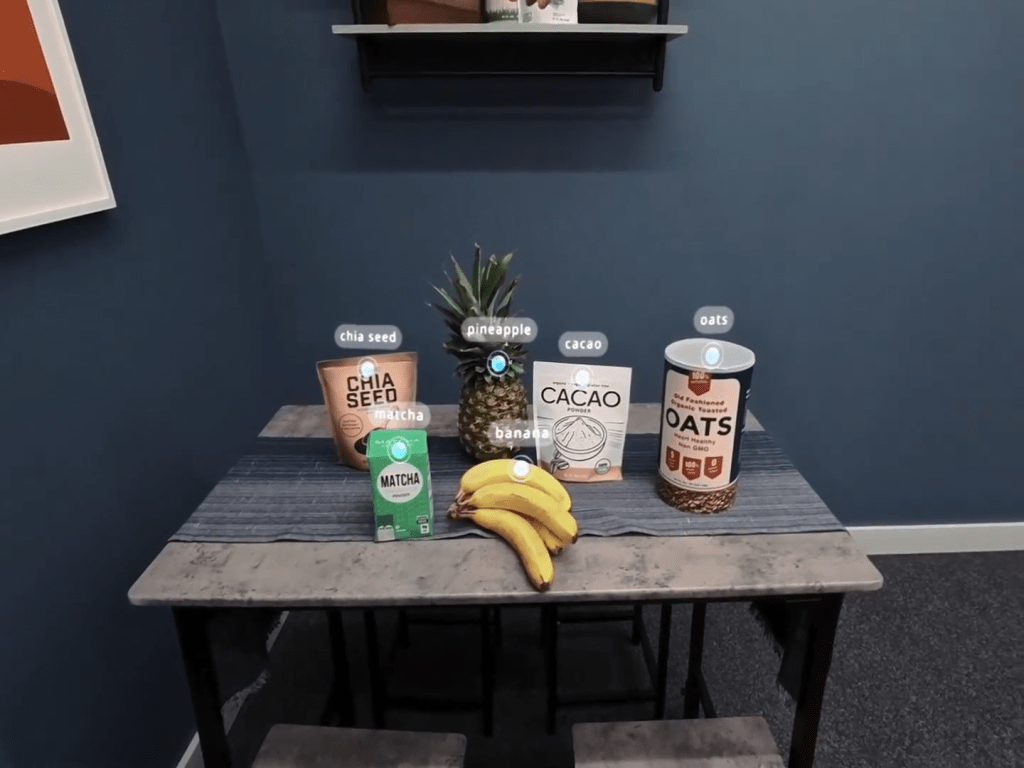

Made systems for pinpointing objects in 3D space on AR and VR headsets. These systems can track objects as they move and provide the outlines of the objects being identified.

I made many applications for the Orion AR glasses, and worked on the messaging and AI aspects of the glasses.

Make and share stories with the help of AI

I designed and built colocation safety systems for Quest.

Made a blog on AI writing under a pseudonym.

I made a VR game, which was one of the first post-launch titles for the Quest.

AI Videos

Weekend Projects

These projects were built over weekends. Many of them came out of game jams.

I illustrated and published an edition of Alice’s Adventures in Wonderland under a pseudonym.

Convince AI agents to support you over your rival, Henry.

Hexagon Tactics: The Expanding Arena

Real-time AI that creates the characters and enemies to battle: names, descriptions, and art.

Using AI to create a card-based roguelite island builder.

I used AI to make the art and narration for an experience about my wife’s cave diving.

I made an interactive visual experience, focusing on boid behavior and shaders.